The Future of Trust: AI & Cybersecurity in Financial Services 2026

Artificial intelligence is no longer a peripheral innovation topic in financial services. It is becoming part of the operational core of banking, insurance, and fintech. Over the next several years, the most important strategic question will not be whether institutions should adopt AI. It will be whether they can scale AI without weakening trust.

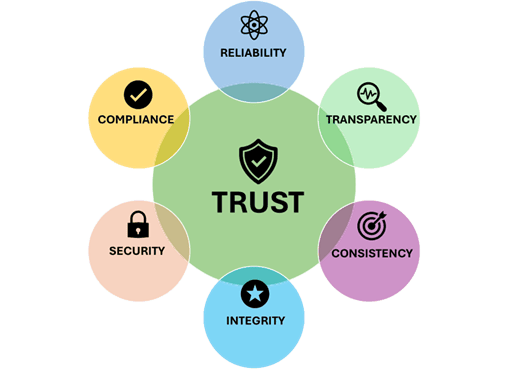

That distinction matters because trust in financial services is different from trust in most other industries. In banking and insurance, trust is tied to decision quality, security, regulatory discipline, data stewardship, and continuity of service. Customers may tolerate a poor digital experience from a retailer. They are far less forgiving when a bank fails to stop fraud, when an insurer cannot explain a decision, or when a fintech platform exposes sensitive data. As AI becomes embedded in fraud detection, underwriting, claims handling, customer service, identity verification, and internal operations, the technology starts to shape not just efficiency, but legitimacy.

From 2026 to 2030, trust will increasingly be determined by how institutions combine AI capability with cybersecurity maturity and governance discipline. The winners will not simply be the firms with the most advanced models. They will be the firms that can prove their systems are resilient, their decisions are controlled, and their innovation is defensible under scrutiny from customers, boards, and regulators.

Why trust is becoming a strategic infrastructure layer

Trust has always been essential in financial services, but it is now evolving from a brand attribute into an infrastructure layer. In the past, institutions could build trust primarily through reputation, scale, long-term stability, and product reliability. In the next phase of digital transformation, those factors will remain important, but they will no longer be sufficient.

Trust is now linked to decision integrity

As AI participates more directly in decisions involving credit, fraud, claims, pricing, and customer interactions, trust becomes inseparable from decision integrity. Institutions will be judged by whether their systems produce outcomes that are accurate, explainable, consistent, and fair. A fast decision that cannot be justified is not a trustworthy decision. A highly automated process that introduces hidden bias or inconsistent outcomes creates reputational and regulatory exposure, even if it improves short-term productivity.

Trust must hold under pressure

In financial services, trust is tested most severely when conditions become difficult. During fraud spikes, cyber incidents, market volatility, or operational disruption, institutions must demonstrate that their controls continue to function. This is why trust increasingly depends on resilience rather than messaging. A company is not trusted because it claims to be secure. It is trusted because it can sustain secure and reliable operations when conditions are adverse.

AI is expanding both value creation and risk concentration

The financial sector has good reason to accelerate AI adoption. The technology can improve signal detection, reduce manual workloads, increase response speed, and support better operational prioritization. In fraud operations, AI can identify suspicious patterns more effectively than static rule sets alone. In insurance, it can streamline claims triage and support more consistent assessments. In customer operations, it can improve service quality and reduce handling time.

Yet the same technology also concentrates risk in new ways.

Wrong decisions can scale faster than before

A traditional process failure may affect one team, one region, or one stage of a workflow. An AI-enabled process can affect thousands of decisions before anyone detects a problem. Model drift, weak training data, poor governance, and inadequate oversight can turn localized weaknesses into systemic operational problems. In this sense, AI does not merely introduce new technology risk. It changes the scale and speed at which risk can propagate.

The attack surface is becoming more complex

AI systems create new exposure points across data pipelines, model interfaces, orchestration layers, third-party dependencies, and user interaction channels. Sensitive information may be exposed through poorly governed workflows. Models may be manipulated indirectly through adversarial inputs or poisoned data. Output reliability may degrade over time without clear operational signals. At the same time, criminal actors are using AI to improve phishing, identity spoofing, social engineering, and synthetic fraud.

For banking, insurance, and fintech leaders, this means AI risk cannot be treated as a niche technical concern. It is a strategic issue spanning cyber, operations, compliance, customer trust, and executive accountability.

Cybersecurity is moving from perimeter defense to adaptive resilience

Cybersecurity in financial services has been evolving for years, but AI is accelerating the need for a more adaptive model. Static controls, isolated monitoring practices, and heavily manual investigation processes are increasingly misaligned with the pace of modern threats. The next phase will depend on combining AI-enabled detection with disciplined control architectures.

Detection must become faster and more contextual

In complex financial environments, security teams are overwhelmed not only by threat volume, but by noise. AI can support more effective signal prioritization by correlating behavior across identity, transactions, devices, channels, and network activity. This allows institutions to detect anomalous behavior with greater context and respond more precisely. The value is not simply automation. It is decision support at a speed that matches the threat environment.
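One way to picture contextual prioritization is a score that rises when independent signals agree. The sketch below is purely illustrative: the `Alert` structure, the domain weights, and the corroboration bonus are assumptions made for the example, not a recommended detection model.

```python
from dataclasses import dataclass

# Hypothetical per-domain weights; a real program would calibrate these from data.
WEIGHTS = {
    "identity": 0.30,
    "transaction": 0.30,
    "device": 0.15,
    "channel": 0.10,
    "network": 0.15,
}


@dataclass
class Alert:
    alert_id: str
    signals: dict  # domain name -> anomaly score in [0, 1]


def priority(alert: Alert, corroboration_threshold: float = 0.5) -> float:
    """Weighted anomaly score, boosted when several domains agree."""
    base = sum(WEIGHTS[d] * alert.signals.get(d, 0.0) for d in WEIGHTS)
    corroborating = sum(
        1 for d in WEIGHTS if alert.signals.get(d, 0.0) >= corroboration_threshold
    )
    # Assumed bonus: each additional corroborating domain adds 0.1.
    bonus = 0.1 * max(0, corroborating - 1)
    return min(1.0, base + bonus)


def triage(alerts):
    """Return alerts ordered from highest to lowest priority."""
    return sorted(alerts, key=priority, reverse=True)


single = Alert("A-1", {"transaction": 0.9})
corroborated = Alert("A-2", {"identity": 0.6, "transaction": 0.6, "device": 0.6})
queue = triage([single, corroborated])
```

In this toy example the alert with three moderate but agreeing signals ranks above the alert with one strong isolated signal, which is the kind of context a single-channel rule would miss.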

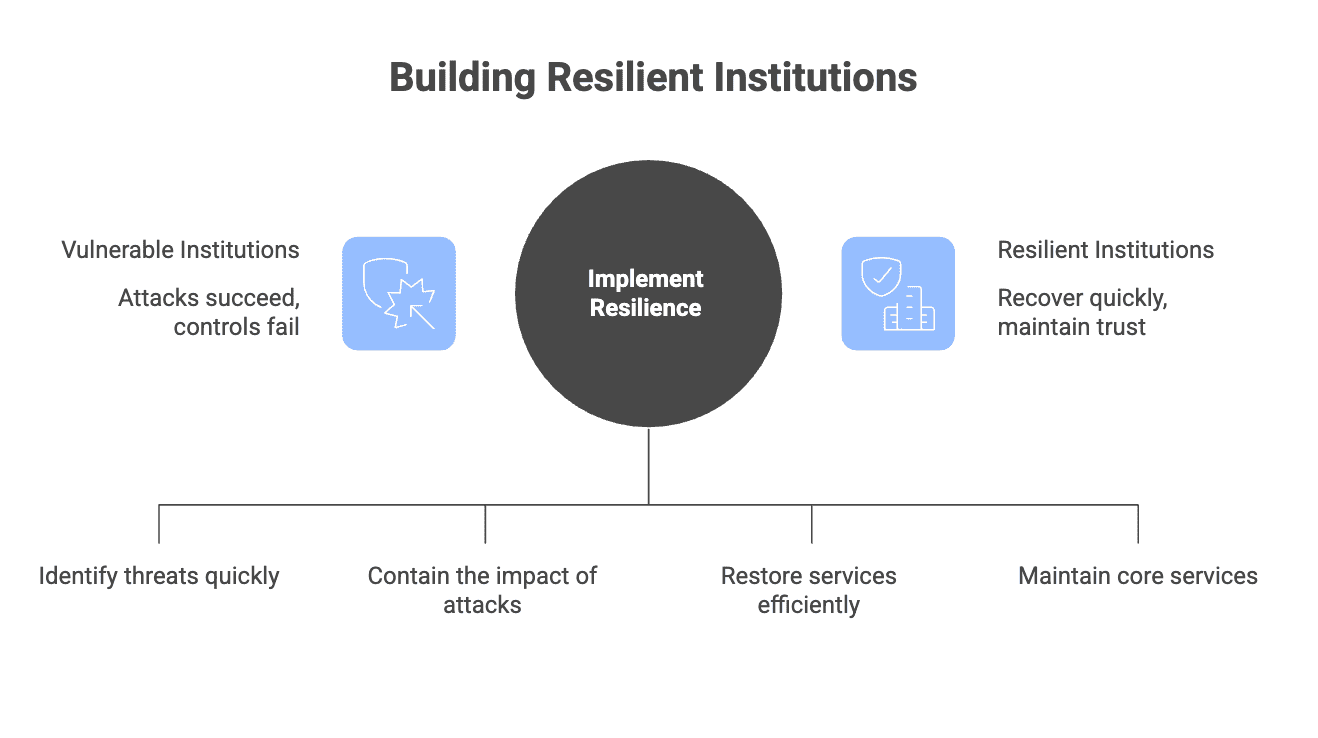

Resilience matters as much as prevention

Prevention remains critical, but it is no longer enough as the central organizing principle. Institutions must assume that some attacks will succeed, some controls will fail, and some disruptions will occur. The differentiator is therefore resilience, meaning the ability to detect early, isolate quickly, recover with discipline, and protect core customer journeys throughout the incident lifecycle.

In highly regulated sectors, resilience also has a trust dimension. Customers and regulators are not evaluating only whether an incident occurred. They are evaluating how the institution responded, whether critical services remained available, and whether decision-making remained controlled during the disruption.

Governance will determine whether AI strengthens or erodes trust

Much of the current market conversation still treats AI governance as a compliance exercise. In reality, governance is becoming a central operating requirement. Without it, institutions may achieve rapid deployment but fail to create durable trust.

Data governance is the first control boundary

Every AI system inherits the strengths and weaknesses of its data environment. If institutions cannot establish data quality, access discipline, lineage visibility, and clear handling rules for sensitive information, they will struggle to build reliable AI systems. In financial services, this is especially important because data is not just an asset. It is often legally sensitive, commercially critical, and deeply tied to customer identity.

Model governance must be operational, not theoretical

Model governance cannot stop at documentation and validation at launch. It must extend into live monitoring, performance review, drift detection, and escalation processes. Institutions need to know when a model is no longer performing within acceptable boundaries and what actions follow when that happens. A model that performs well in testing but cannot be governed in production is not enterprise ready.
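As an illustration of what "acceptable boundaries" and "what actions follow" could look like in practice, the sketch below computes a Population Stability Index (PSI) between a baseline sample and live scores, then maps the result to an action. PSI is only one of several possible drift measures. The bin edges, the 0.10 and 0.25 thresholds (a common rule of thumb, not a standard), and the action names are assumptions for the example.

```python
import math
from bisect import bisect_right


def psi(baseline, live, edges, eps=1e-6):
    """Population Stability Index between two samples over fixed bin edges."""

    def shares(values):
        if not values:
            raise ValueError("sample must not be empty")
        counts = [0] * (len(edges) + 1)
        for v in values:
            counts[bisect_right(edges, v)] += 1
        # Floor each share at eps so empty bins do not produce log(0).
        return [max(c / len(values), eps) for c in counts]

    expected, actual = shares(baseline), shares(live)
    return sum((a - e) * math.log(a / e) for e, a in zip(expected, actual))


def drift_action(score, warn=0.10, escalate=0.25):
    """Map a PSI value to a hypothetical governance action."""
    if score >= escalate:
        return "escalate"
    if score >= warn:
        return "review"
    return "ok"


baseline_scores = [0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8, 0.9, 1.0]
shifted_scores = [0.9] * 10
bin_edges = [0.25, 0.5, 0.75]

stable = drift_action(psi(baseline_scores, baseline_scores, bin_edges))
drifted = drift_action(psi(baseline_scores, shifted_scores, bin_edges))
```

The point of the sketch is the pairing: a measurable boundary and a named action that follows when the boundary is crossed. Which metric and which thresholds apply would depend on the model and the institution's risk appetite.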

Human oversight remains essential in high-consequence decisions

There is a temptation to frame mature AI adoption as a journey toward full autonomy. In financial services, that view is often too simplistic. For decisions involving fraud escalation, credit outcomes, claims disputes, customer remediation, or compliance exposure, meaningful human oversight remains necessary. Oversight is not a sign of technological weakness. It is a control requirement in environments where accountability cannot be delegated to a model.

The operating model must change, not just the technology stack

One of the biggest barriers to trustworthy AI adoption is organizational design. Many institutions still separate innovation, cybersecurity, risk, data, and compliance into parallel functions with limited integration until late in the delivery process. That structure may have been manageable when AI use cases were isolated pilots. It is not sustainable when AI becomes part of core operations.

Trust requires cross-functional design from the start

High-impact AI use cases should not be designed by a single function and reviewed later by others. They should be shaped from the beginning by business owners, cyber teams, data leaders, risk managers, and compliance stakeholders. This does not slow innovation when done well. It reduces expensive rework, lowers deployment risk, and increases the likelihood that a use case can scale responsibly.

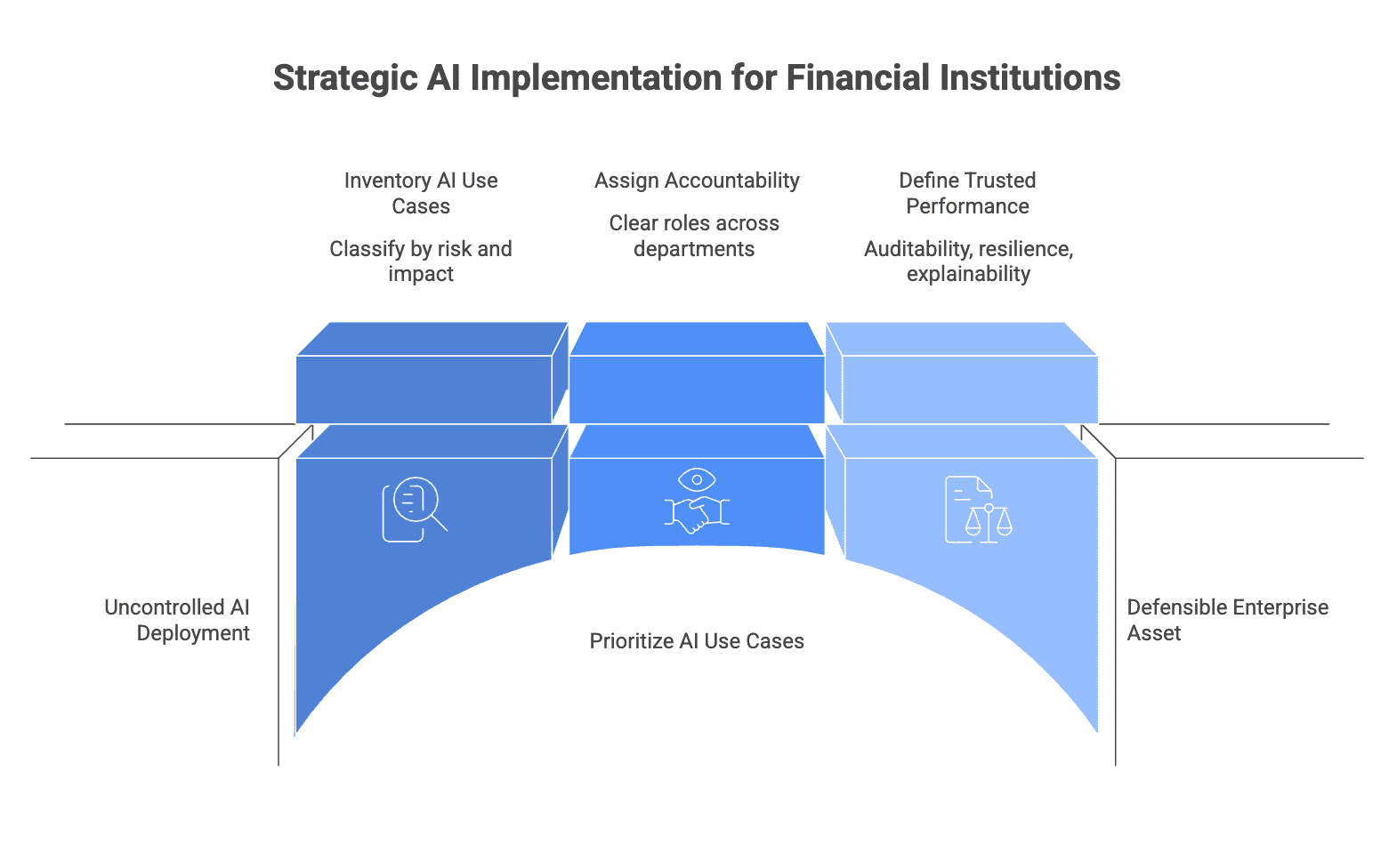

Not every AI use case should be governed the same way

A useful operating principle for the next several years is risk tiering. Institutions should distinguish between low-consequence use cases, such as internal productivity support, and high-consequence use cases, such as decision support involving customers, money movement, or regulated processes. The control model should be calibrated accordingly. This is how organizations avoid two common failures: under-controlling high-risk systems and over-controlling low-risk ones.
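Risk tiering can be expressed as a small classification rule plus a control set per tier. The sketch below is a minimal illustration: the `UseCase` fields mirror the criteria named above, but the two-tier split, the field names, and the control lists are assumptions rather than a prescribed framework.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    LOW = "low"
    HIGH = "high"


@dataclass(frozen=True)
class UseCase:
    name: str
    affects_customers: bool
    moves_money: bool
    regulated_process: bool


# Hypothetical control sets; real ones would come from the institution's policy.
CONTROLS = {
    Tier.LOW: ["usage policy", "periodic review"],
    Tier.HIGH: [
        "pre-launch validation",
        "live drift monitoring",
        "human review of outcomes",
        "audit trail",
    ],
}


def classify(use_case: UseCase) -> Tier:
    """Any customer, money-movement, or regulatory impact makes a use case high tier."""
    if use_case.affects_customers or use_case.moves_money or use_case.regulated_process:
        return Tier.HIGH
    return Tier.LOW


def required_controls(use_case: UseCase) -> list:
    return CONTROLS[classify(use_case)]


assistant = UseCase("internal drafting assistant", False, False, False)
fraud_scoring = UseCase("payment fraud scoring", True, True, True)
```

Here `required_controls(assistant)` returns the lighter set while `required_controls(fraud_scoring)` returns the heavier one, so the control burden follows the consequence of the decision rather than the novelty of the technology.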

Where financial institutions should focus now

The most effective strategy is not to launch AI everywhere at once. It is to prioritize use cases where business value and control maturity can advance together. Fraud detection, customer authentication, AML support, underwriting assistance, claims triage, and cyber alert prioritization are strong areas because they offer measurable impact while also revealing how governance performs in practice.

Institutions should begin by inventorying active and planned AI use cases, classifying them by risk and customer impact, and assigning clear accountability across business, cyber, data, and compliance. They should define what trusted performance means, not only in terms of return on investment, but in terms of auditability, resilience, explainability, and operational control. This is the discipline that turns AI from a promising capability into a defensible enterprise asset.

Conclusion

From 2026 to 2030, financial institutions will not struggle to access AI. They will struggle to deploy it in ways that remain secure, explainable, resilient, and trustworthy under real-world pressure. In banking, insurance, and fintech, trust will increasingly be shaped by the quality of the connection between AI, cybersecurity, and governance.

This is why the future of trust is not just a technology story. It is an institutional design story. The organizations that lead in the next era will be those that understand a simple but demanding truth: in financial services, innovation creates value only when control keeps pace.
